How AI Changes the Way We Conduct Research

The scientific enterprise stands at what MIT Associate Professor Rafael Gómez-Bombarelli calls “a second inflection point”. For centuries, research has been driven by human curiosity, painstaking experimentation, and the slow accumulation of knowledge across generations. Now, artificial intelligence — powered by exponential growth in computing, the explosion of available data, and breakthroughs in algorithmic design — is poised to fundamentally reshape how hypotheses are formed, how experiments are designed, and how discoveries are communicated. The question is no longer whether AI will change research, but how profoundly and how quickly (Chen et al., 2025).

This article examines the multifaceted ways in which AI is already transforming the research landscape, drawing on insights from leading universities, major technology companies, comprehensive surveys, and landmark policy reports. It explores the promise and the peril of AI-driven research, and introduces Gatsbi, an emerging tool that is making these capabilities accessible to researchers worldwide.

The Computational Revolution Behind AI-Driven Research

The foundational algorithms behind modern AI are not new. As Dr. James Fergusson, Director of the Infosys-Cambridge AI Centre at the University of Cambridge, explains: “What’s changed isn’t the methods — we’ve had most of those since the 1970s. What’s changed is that we now have enough computing power and data to make them work”. If Moore’s Law holds — as it has for roughly 50 years — computing power will continue to grow by a factor of 30 every decade, making AI systems exponentially more capable without any algorithmic breakthrough at all.
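The factor of 30 is easy to check. The short sketch below assumes the common reading of Moore's Law as a doubling roughly every two years (an assumption; the text above quotes only the per-decade figure), and the helper names are hypothetical.

```python
import math

def growth_factor(years: float, doubling_time_years: float) -> float:
    """Multiplicative growth over `years` if capacity doubles every `doubling_time_years`."""
    return 2 ** (years / doubling_time_years)

def implied_doubling_time(factor: float, years: float) -> float:
    """Doubling time (in years) that would produce `factor` growth over `years`."""
    return years / math.log2(factor)

# Assumed two-year doubling: 2 ** 5 = 32, close to the quoted factor of 30.
per_decade = growth_factor(10, 2)

# Working backwards from the quoted factor of 30 gives a doubling time of about two years.
doubling = implied_doubling_time(30, 10)
```

Either way the two figures agree: a factor of about 30 per decade and a doubling about every two years describe the same exponential trend.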

This relentless march of computational power has unlocked capabilities that were previously theoretical. The Royal Society’s landmark report Science in the Age of AI (May 2024) documents this transformation in detail, noting that AI applications can now be found across all STEM fields, with a particular concentration in medicine, materials science, robotics, agriculture, genetics, and computer science.

The Scale of Investment

The numbers underscore the magnitude of the shift. The Royal Society report estimates the global AI market at approximately £106.99 billion as of 2022. AI-related patents have surged, with 74% of all filings occurring in the last five years, and the compound annual growth rate projected at 37.3% through 2030. China contributes approximately 62% of the global AI patent landscape, with the United States at 13.2%. Meanwhile, WIPO data shows that more than 54,000 generative AI inventions were filed between 2014 and 2023, with over 25% emerging in the last year of that period alone (WIPO, 2024). The U.S. Department of Energy’s Genesis Mission, launched in November 2025, further signals the geopolitical stakes of AI-driven scientific discovery.

A Taxonomy of AI in Research

A comprehensive survey published on arXiv (Chen et al., 2025) offers a unified taxonomy for understanding how AI intersects with the research process. The authors identify five mainstream tasks:

- AI for Scientific Comprehension — extracting and understanding information from vast bodies of scientific literature

- AI for Academic Surveys — systematically reviewing and synthesizing research across fields

- AI for Scientific Discovery — generating novel hypotheses, running simulations, and identifying patterns in data

- AI for Academic Writing — drafting, formatting, and refining manuscripts with citations and figures

- AI for Academic Reviewing — automating and augmenting the peer review process

The survey notes a critical distinction between “AI4Science”, which targets specific scientific problems and experimental protocols, and “AI4Research”, which addresses broader research methodologies and academic infrastructure. As large language models (LLMs) such as OpenAI-o1 and DeepSeek-R1 develop more advanced reasoning capabilities, a unified workflow is emerging that can address both specialized scientific challenges and general research processes.

Emerging Architectures

New agent architectures are accelerating this convergence. The PaSa agent discovers literature by actively traversing citation networks; the ExSearch framework allows agents to continuously optimize search strategies through self-incentivization loops; and CuriousLLM employs a “curiosity-driven” mechanism to guide retrieval across knowledge graphs. These innovations point toward a future in which AI does not simply assist researchers but actively participates in the intellectual fabric of discovery (Chen et al., 2025).
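To make the idea of traversing a citation network concrete, here is a minimal sketch of a breadth-first walk over a toy citation graph. It is not PaSa's actual algorithm, which the text above describes only at a high level. The graph, the paper identifiers, and the `discover_papers` helper are all hypothetical.

```python
from collections import deque

def discover_papers(citations: dict[str, list[str]], seed: str, max_hops: int) -> list[str]:
    """Return papers reachable from `seed` within `max_hops` citation links, in visit order."""
    seen = {seed}
    order = []
    queue = deque([(seed, 0)])
    while queue:
        paper, hops = queue.popleft()
        order.append(paper)
        if hops == max_hops:
            continue  # hop budget reached: record the paper but do not expand it
        for cited in citations.get(paper, []):
            if cited not in seen:
                seen.add(cited)
                queue.append((cited, hops + 1))
    return order

# Toy graph: each key cites the papers in its list (illustrative identifiers only).
toy_graph = {
    "survey": ["paper_a", "paper_b"],
    "paper_a": ["paper_c"],
    "paper_b": ["paper_c", "paper_d"],
    "paper_c": [],
}

found = discover_papers(toy_graph, "survey", max_hops=2)
```

A real agent would presumably decide which links are worth following rather than expanding every citation, but the underlying structure, a graph explored outward from a seed paper, is the same.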

AI-Enhanced Simulations and Materials Discovery

At MIT, Gómez-Bombarelli’s lab exemplifies the potential of combining physics-based simulations with machine learning. His group has used AI to discover new materials for batteries, catalysts, plastics, and organic light-emitting diodes (OLEDs). He was among the first to use generative AI for chemistry in 2016 and one of the earliest adopters of neural networks for molecular understanding in 2015 (MIT News, 2026).

“Physics-based simulations make data, and AI algorithms get better the more data you give them,” Gómez-Bombarelli explains. “There are all sorts of virtuous cycles between AI and simulations”. His research group is entirely computational — they do not run physical experiments — but they collaborate closely with experimentalists and create computational tools that help laboratories prioritize AI-generated ideas.

The broader trend is unmistakable. Companies like Meta, Microsoft, and Google’s DeepMind now regularly conduct physics-based simulations that were pioneering just a decade ago. “AI for simulations has gone from something that maybe could work to a consensus scientific view”, Gómez-Bombarelli says. “We’ve seen that scaling works for simulations. We’ve seen that scaling works for language. Now we’re going to see how scaling works for science”.

Multi-Agent Systems: The New Research Assistants

Cambridge’s Agentic AI. The Infosys-Cambridge AI Centre, opened in autumn 2024, is organized around three research themes: AI-enhanced simulations, Mathematical AI (applying theoretical physics to understand how neural networks work), and Agentic AI systems designed to automate much of the scientific research process.

Dr. Boris Bolliet, the Centre’s Agentic AI Research Lead, develops custom multi-agent systems based on large language models such as ChatGPT, Claude, and Gemini. These systems — including CMBAgent and DENARIO — can plan and execute complex tasks ranging from financial simulations to cosmological data analysis and autonomous research paper writing (Cambridge Uni., 2025).

The multi-agent model breaks complex problems into smaller tasks, verifies outputs, and works like a team of digital research assistants. Bolliet assigns distinct roles or “personalities” to each agent: one might be a researcher, another an engineer, another an idea generator, and another a relentless critic — a “hater” whose job is to challenge and criticize every output to make the final product more robust.
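The division of labor described above can be sketched as a small pipeline. This is an illustrative toy, not the design of CMBAgent or DENARIO: each "agent" is a plain Python function standing in for a call to a language model, and every name (`Agent`, `run_team`, the role functions) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Agent:
    role: str
    act: Callable[[str], str]  # stand-in for a call to a language model

def idea_generator(task: str) -> str:
    return f"idea for {task}"

def engineer(idea: str) -> str:
    return f"implementation of {idea}"

def critic(draft: str) -> str:
    # The "hater": always returns an objection for the next round to address.
    return f"objection to {draft}"

def run_team(task: str, rounds: int = 2) -> list[tuple[str, str]]:
    """Pass work through the agents in turn, logging every step for later inspection."""
    team = [
        Agent("idea generator", idea_generator),
        Agent("engineer", engineer),
        Agent("critic", critic),
    ]
    log = []
    current = task
    for _ in range(rounds):
        for agent in team:
            current = agent.act(current)
            log.append((agent.role, current))
    return log

transcript = run_team("estimate a cosmological parameter", rounds=1)
```

Because each step is appended to `log`, the full chain of intermediate outputs can be read back after the run, which is the property that makes this kind of system auditable.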

“We want to use AI to accelerate the exchange of information across fields”, Bolliet says. “AI agents don’t have these barriers. They’re not stuck in one discipline like we human researchers are”. Crucially, multi-agent systems offer a form of transparency that single AI models often lack. “I can see every single step that has occurred and go through the code line by line”, Bolliet says. “I can reproduce the research entirely, which is not necessarily true when you talk to your colleagues and ask them what they did in their paper”.

Google’s AI Co-Scientist. Google has taken the multi-agent paradigm even further with its AI co-scientist, a system built on Gemini 2.0 and designed to function as a virtual scientific collaborator. The system employs six specialized agents — Generation, Reflection, Ranking, Evolution, Proximity, and Meta-review — that are inspired by the scientific method itself. Given a research goal described in natural language, the AI co-scientist generates novel hypotheses, detailed research overviews, and experimental protocols.

The results have been striking. In drug repurposing for acute myeloid leukemia (AML), the AI co-scientist proposed novel candidates that were subsequently validated in laboratory experiments: of five drugs tested, three successfully killed AML cancer cells at clinically relevant concentrations. For liver fibrosis, the system identified epigenetic targets with significant anti-fibrotic activity; the FDA-approved cancer drug vorinostat, suggested by the AI, was observed to reduce TGFβ-induced chromatin structural changes by 91% and promote liver cell regeneration — while neither of the human expert’s own candidates proved effective. In antimicrobial resistance research, the AI independently proposed a mechanism for bacterial gene transfer that matched experimental findings developed over nearly a decade (Gottweis & Natarajan, 2025).

Seven domain experts evaluated the system across 15 open research goals and found that the AI co-scientist outperformed other state-of-the-art reasoning and agentic models. These results demonstrate that AI is no longer merely automating existing tasks but actively contributing to the generation of novel, testable scientific knowledge.

Practical Tools for Researchers: Gatsbi as a Case Study

While the breakthroughs described above occur at elite institutions and major technology companies, a critical question remains: how can the broader research community — including early-career researchers, scholars at under-resourced institutions, and interdisciplinary teams — access these capabilities?

This is where platforms like Gatsbi become essential. Gatsbi is an AI-powered research assistant that brings the power of generative AI and multi-agent reasoning directly to the individual researcher’s desktop. Rather than requiring expertise in machine learning or access to supercomputing infrastructure, Gatsbi provides an intuitive interface that automates key stages of the research workflow.

Core Capabilities

Gatsbi is built around three intelligent agents that mirror the core activities of research:

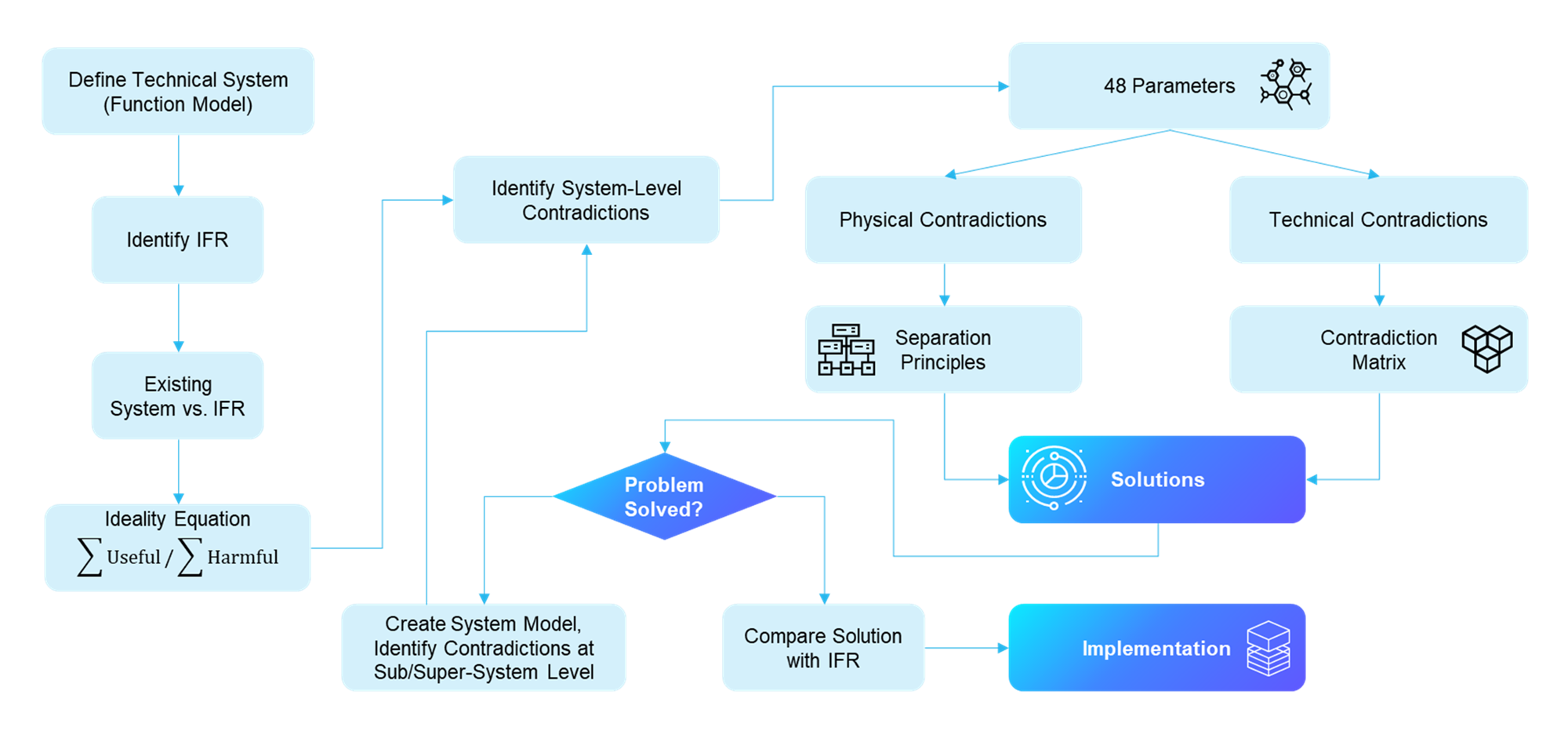

- Gatsbi Innovator — Researchers input a topic, and the system performs AI-driven systemic analyses: examining components, engineering parameters, contradictions, and research gaps. It then generates 10–20 inventive solutions, each accompanied by originality scores, feasibility evaluations, and relevant references from the latest academic literature. This mirrors, at an accessible scale, the hypothesis-generation capabilities demonstrated by Google’s AI co-scientist.

- Gatsbi Writer — From research instructions to structured manuscript drafts, Gatsbi Writer supports the academic writing workflow with tools for organizing citations, equations, figures, experimental tables, charts, and formatted references. With the integrated Deep Research Agent introduced in Gatsbi 3.0, users can collect and synthesize relevant evidence before drafting, making it easier to build literature-grounded arguments and coherent research manuscripts. Documents can be exported in Word, LaTeX, or Markdown and formatted in APA, IEEE, Harvard, Chicago NB, or AMA citation styles.

- Gatsbi Reviewer — For systematic literature reviews and meta-analyses, Gatsbi automates study screening, data extraction, and synthesis. It generates analytical dimensions, forest plots, funnel plots, heterogeneity measures, and publication-quality meta-analysis manuscripts.
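For readers unfamiliar with the statistics named in the last item, the sketch below shows the textbook fixed-effect (inverse-variance) pooled estimate together with Cochran's Q and the I² heterogeneity measure. It illustrates the standard formulas only and makes no claim about how Gatsbi computes them. The function name and the effect sizes are made up for illustration.

```python
def fixed_effect_meta(effects: list[float], variances: list[float]) -> dict[str, float]:
    """Inverse-variance pooled effect, its standard error, Cochran's Q, and I^2 (percent)."""
    weights = [1.0 / v for v in variances]  # more precise studies get more weight
    total_w = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_w
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variation attributed to heterogeneity rather than chance.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {"pooled": pooled, "se": (1.0 / total_w) ** 0.5, "Q": q, "I2": i2}

# Hypothetical study effects (e.g. standardized mean differences) and their variances.
result = fixed_effect_meta([0.2, 0.5, 0.8], [0.04, 0.04, 0.04])
```

A forest plot is essentially a picture of these inputs and outputs: one row per study showing its effect and confidence interval, plus a final row for the pooled estimate.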

Beyond Writing: Innovation and Patent Support

Gatsbi extends beyond academic writing to support parts of the innovation documentation workflow. It assists users in preparing invention disclosure drafts in 11 languages, including technical background summaries, invention descriptions, implementation examples, embodiment descriptions, drawing descriptions, and claim-style outlines for further professional review.

This capability is designed to help researchers, engineers, and R&D teams organize technical ideas more efficiently before consulting qualified patent professionals. Gatsbi does not provide legal advice, patentability opinions, freedom-to-operate analysis, or patent prosecution services. Users remain responsible for verifying all technical and legal content and for obtaining professional advice where required.

Positioning in the AI Research Tool Landscape

Compared to other AI writing assistants, Gatsbi distinguishes itself through its focus on the complete research lifecycle — from ideation to drafting to review — rather than treating each stage in isolation. While tools like Paperpal focus primarily on grammar and editing, and SciSpace on literature management, Gatsbi integrates technical elements such as auto-generated figures, LaTeX equations, and experimental charts alongside full paper drafting and patent writing. This integrated approach aligns with the broader trajectory described in the AI4Research survey, where unified workflows are emerging to address both specialized scientific challenges and general research processes.

The Peril: Challenges and Risks

As the UNESCO interview with Royal Society representatives makes explicit: “Care needs to be taken not to become over-reliant on opaque AI-based research, as this could undermine the reliability of scientific findings and their practical application. This, in turn, would undermine trust in science”. The black-box nature of advanced deep learning models means it is often challenging to explain how a model reaches a decision — and, like humans, AI makes errors (UNESCO, 2024).

Ethical and Safety Concerns

Potential risks extend beyond reproducibility and environmental impact. The Royal Society identifies dangers including data poisoning, the spread of scientific misinformation through AI-generated text, and the malicious repurposing of AI models. The report calls for the establishment of domain-specific taxonomies of AI risks, particularly in sensitive fields such as chemical and biological research. UNESCO echoes these concerns, recommending that open science, environmental, and ethical frameworks be established for AI-based research to ensure findings are accurate, reproducible, and supportive of the public good.

The Pressure to Be “Good at AI” Rather Than “Good at Science”

An underappreciated risk is cultural: the Royal Society warns that the significant potential to advance discoveries using complex deep learning models may encourage scientists or funders to prioritize AI use over rigor, or to be “good at AI” rather than “good at science”. This subtle shift in incentives could erode the integrity of the scientific method if not carefully managed through institutional norms and funding structures.

Conclusion

AI is not merely a new tool in the researcher’s toolkit — it represents a paradigm shift in the nature and method of scientific inquiry. From the multi-agent systems being developed at Cambridge and Google to the high-throughput simulations at MIT, to the accessible, all-in-one research platform offered by Gatsbi, the transformation is already underway.

The potential is extraordinary: AI can generate novel hypotheses, traverse disciplinary boundaries, automate repetitive tasks, and accelerate the pace of discovery in ways that were unimaginable a decade ago. Yet this potential comes with real risks — to reproducibility, to equity, to the environment, and to the integrity of the scientific method itself.

As Fergusson at Cambridge puts it: “There is a transformational opportunity to change the way scientific research is done using AI. It will no longer be something only humans can do, but something we do in collaboration with machines, that can carry out high-level scientific analysis, fast and accurately”. The future of research, it seems, will belong to those who can ask the best questions — and who have the wisdom to ensure that the answers are trustworthy, equitable, and in service of the public good (Drolet et al., 2022).